I spent almost the whole day debugging, trying to find out why a plain, simple LXC setup on Ubuntu 14.04 LTS wasn't working correctly.

LXC itself was working fine and the container was created. But as soon as the container tried to communicate with other servers in the same subnet, every connection attempt failed. Even the container's MAC address could not be resolved via ARP.

On the LXC host, which is a virtual machine running on a VMware ESXi host, a very basic network configuration using the single network interface (eth0) as bridge was defined:

# The primary network interface
iface eth0 inet manual

# Virtual bridge
auto virbr0
iface virbr0 inet static
    address 192.168.168.51
    netmask 255.255.255.0
    network 192.168.168.0
    broadcast 192.168.168.255
    gateway 192.168.168.1
    # bridge control
    bridge_ports eth0
    bridge_fd 0
    pre-up brctl addbr virbr0
    pre-down brctl delbr virbr0
    # dns-* options are implemented by the resolvconf package, if installed
    dns-nameservers 192.168.168.10
    dns-search claudiokuenzler.com

The LXC configuration file (/var/lib/lxc/mylxc/config) used this virbr0 bridge as its link:

# Network configuration
lxc.network.type = veth
lxc.network.flags = up
lxc.network.link = virbr0
lxc.network.hwaddr = 00:FF:AA:00:00:53
lxc.network.ipv4 = 192.168.168.53/24
lxc.network.ipv4.gateway = 192.168.168.1

But after I started the container and tried to ping it from other servers in this subnet, I never got a response. No network traffic worked at all. From within the container I was not even able to ping the gateway.

With tcpdump, however, I saw continuous ARP requests trying to resolve the MAC address for the IP 192.168.168.53:

tcpdump host 192.168.168.53
14:20:51.672635 ARP, Request who-has 192.168.168.53 tell 192.168.168.52, length 46
14:20:51.672679 ARP, Reply 192.168.168.53 is-at 0a:2a:2a:d0:8f:00 (oui Unknown), length 28
14:20:51.743872 ARP, Request who-has 192.168.168.53 tell 192.168.168.52, length 46
14:20:51.743910 ARP, Reply 192.168.168.53 is-at 0a:2a:2a:d0:8f:00 (oui Unknown), length 28
14:20:51.774195 ARP, Request who-has 192.168.168.11 tell 192.168.168.53, length 28
14:20:52.673736 ARP, Request who-has 192.168.168.53 tell 192.168.168.52, length 46
14:20:52.673781 ARP, Reply 192.168.168.53 is-at 0a:2a:2a:d0:8f:00 (oui Unknown), length 28
14:20:52.761621 ARP, Request who-has 192.168.168.53 tell 192.168.168.52, length 46
14:20:52.761668 ARP, Reply 192.168.168.53 is-at 0a:2a:2a:d0:8f:00 (oui Unknown), length 28
14:20:52.774229 ARP, Request who-has 192.168.168.11 tell 192.168.168.53, length 28
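To make the pattern in such a capture easier to spot, the requests and replies for a single IP can be counted. This is only a minimal sketch: the function name `arp_summary` is made up for illustration, and it assumes tcpdump's default one-line ARP output in the form shown in the capture.

```shell
# Hypothetical helper: count ARP requests for, and ARP replies from, one IP
# in tcpdump text output read from stdin.
arp_summary() {
    ip="$1"
    awk -v ip="$ip" '
        index($0, "Request who-has " ip " ") { req++ }
        index($0, "Reply " ip " is-at ")     { rep++ }
        END { printf "requests=%d replies=%d\n", req, rep }
    '
}

# Example (needs root; -n disables name resolution, -l line-buffers,
# -c 50 stops after 50 packets):
#   tcpdump -l -n -c 50 arp and host 192.168.168.53 | arp_summary 192.168.168.53
```

If replies are counted on the capturing machine while the requester keeps asking, the replies are most likely being sent but lost somewhere on the way back.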

On the remote server (192.168.168.52) the MAC address was never learned; the ARP entry stayed incomplete:

root@192.168.168.52:~# arp
Address          HWtype  HWaddress          Flags Mask  Iface
192.168.168.53           (incomplete)                   eth0
192.168.168.12   ether   00:1c:7f:61:38:35  C           eth0
192.168.168.1    ether   02:00:5e:37:41:01  C           eth0
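On a machine with a larger ARP table, the unresolved entries can be filtered out instead of read by eye. A small sketch, assuming the classic `arp` table layout with `(incomplete)` in the hardware address column; the function name `incomplete_arp_entries` is hypothetical.

```shell
# Hypothetical helper: print the address of every ARP table entry that is
# marked "(incomplete)", reading `arp -n` style output from stdin.
incomplete_arp_entries() {
    awk 'index($0, "(incomplete)") { print $1 }'
}

# Example:
#   arp -n | incomplete_arp_entries
```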

At first I suspected ARP issues caused by the container running in the same subnet as the host. In all my previous setups the LXC host had an IP address in a different network than the containers, so this could have been the cause. But none of the hints I found, configuration changes or kernel parameter tweaks for ARP handling resolved the problem. After hours of research and debugging, I shifted my search terms from something like "lxc same subnet as host" or "lxc bridge arp incomplete" to more VMware-specific ones. The keywords "running lxc in vmware network issue" led me to an interesting thread, which pointed me to the right information: promiscuous mode.

VMware KB 1002934 describes what happens at the level of ESXi's virtual switch:

Promiscuous mode is a security policy which can be defined at the virtual switch or portgroup level in vSphere ESX/ESXi.

A virtual machine, Service Console or VMkernel network interface in a portgroup which allows use of promiscuous mode can see all network traffic traversing the virtual switch.

By default, a guest operating system's virtual network adapter only receives frames that are meant for it.

Placing the guest's network adapter in promiscuous mode causes it to receive all frames passed on the virtual switch that are allowed under the VLAN policy for the associated portgroup.

That's exactly the case with LXC. As the virtual machine is an LXC host, it also acts as a bridge for its containers. As long as promiscuous mode is disabled (which seems to be the VMware default), the containers cannot communicate at all. This also explains why the ARP replies sent by the LXC host were never "saved" by the requesters, which kept asking several times per second.

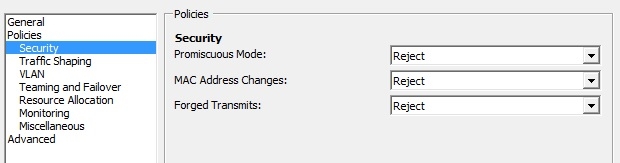

I checked the current settings on the virtual distributed switch (in vSphere client -> Inventory -> Networking -> Right click on the "managed distributed port group" which is used by the VM (LXC host) -> Edit Settings -> Security:

No big surprise: Promiscuous Mode is set to Reject. Which explains everything. I already thought I was going nuts after so many LXC installations (on physical machines though) and suddenly a very basic LXC setup wouldn't work anymore...

Update May 12th 2015:

It turned out setting Promiscuous Mode to Accept was not enough. The setting of "Forged Transmits" also needed to be set to Accept. After having set both Promiscuous Mode and Forged Transmits to Accept, the network communication with the containers worked.
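A likely reason both settings are needed is that the container sends and receives frames with its own MAC address (the `lxc.network.hwaddr` value), not the MAC of the VM's virtual network adapter. Frames addressed to a MAC other than the adapter's are only delivered in promiscuous mode, and outgoing frames with a different source MAC count as forged transmits. The sketch below only compares two MAC addresses to make that mismatch visible; `same_mac` is a hypothetical helper name, and `eth0` and the hwaddr value are taken from the configuration in this post.

```shell
# Hypothetical helper: exit 0 if two MAC addresses are equal, ignoring case.
same_mac() {
    a=$(printf '%s' "$1" | tr 'A-F' 'a-f')
    b=$(printf '%s' "$2" | tr 'A-F' 'a-f')
    [ "$a" = "$b" ]
}

# Example on the LXC host:
#   vm_mac=$(cat /sys/class/net/eth0/address)
#   same_mac "$vm_mac" "00:FF:AA:00:00:53" \
#       || echo "container MAC differs from the VM adapter MAC"
```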

Kristian Kirilov from Sofia wrote on Feb 16th, 2016:

Thanks a lot!
